
What’s really driving Australia’s housing conversation right now?

Explore how housing in Australia has become a nationally entrenched issue where audiences participate in shaping conversation as much as the policymakers.


If there’s one topic Australians never tire of debating, it’s housing. Whether it’s at the pub, around the dinner table, or dominating headlines, property prices, rent hikes and the “can I ever afford a home?” questions are constant fixtures of the national conversation.

But let’s be honest—rising house prices aren’t new. What is changing is how the conversation is evolving, who’s shaping it, and which narratives are starting to stick.

Using Lumina’s Stories and Perspectives, we analysed 19 stories and over 50 perspectives across a 30-day period from 15 March to 14 April 2026 to understand what’s actually driving the housing narrative in Australia right now, and why it matters.

▸ Housing Supply and Affordability Divide: Analysts and economists link supply shortages directly to soaring prices. Cities that built more homes saw far less price growth. Key drivers: Gerard Burg (Cotality), Peter Tulip (Centre for Independent Studies), Australian Associated Press

▸ Tax Reform Debates Heat Up Ahead of Budget: 14 competing perspectives. Advocates say reforms are essential for fairness; the property industry warns they’ll push rents up 30%. Key drivers: Anthony Albanese, Jim Chalmers, Angus Taylor, Housing Industry Association, Saul Eslake

▸ Grattan Institute Connects Housing to Democratic Trust: A major report argues that the housing crisis is eroding public confidence in democracy itself. Key drivers: Aruna Sathanapally, Grattan Institute

This perspective accounted for 100% of the story’s coverage and generated 85 media items, making it the most widely covered story of the entire period. The main insight is that the public is drawing a direct line between housing supply levels and property prices across Australia’s capital cities.

Perth and Brisbane, where home construction has lagged well behind population growth since the pandemic, have seen property values surge sharply. Meanwhile, Victoria, which built a proportionally higher number of new homes, saw weaker growth than the national average.

It ran everywhere from PerthNow to regional papers across NSW and Victoria. The fact that the Australian Associated Press syndicated the data meant it hit dozens of outlets simultaneously.

![2](https://www.isentia.com/wp-content/uploads/2026/04/2-1-scaled.png)

The key drivers are property analysts Gerard Burg from Cotality and Peter Tulip from the Centre for Independent Studies. Both are pushing the same message: if you want to fix affordability, you have to fix supply. Their proposed solution is liberalising zoning laws, particularly in NSW and Victoria, to allow more homes to be built faster.

This story had the widest media footprint of the entire period, reaching outlets from The West Australian to regional mastheads across the country. If your organisation operates in housing, property, or urban planning, the “supply-equals-affordability” narrative is now firmly established in public discourse, and your messaging needs to account for it. Audiences have heard the supply argument before, and with experts now aligned on the issue, it is harder for policymakers to dismiss.

It’s also worth noting that analysing who the key drivers are adds a layer traditional media monitoring might miss. The AAP’s role as the primary distribution channel meant this story reached dozens of outlets simultaneously, from bigger mastheads like PerthNow and The West Australian to hyperlocal outlets like the Cobram Courier and Benalla Ensign. For communicators, this distribution pattern indicates that a story has penetrated both metropolitan and regional audiences, making it impossible to dismiss as just a capital-city concern.

The housing tax reform debate was the most contested, generating 14 distinct perspectives across 23 media items and becoming by far the most multi-sided story of the month. The top three perspectives are the most interesting to look at, given how sharply the two sides disagree and that the dispute reaches the highest levels of government.

At the centre of it is the Albanese Government’s consideration of reducing the capital gains tax discount and limiting negative gearing ahead of the May budget. The country is essentially split down the middle on this one.

Perspective 1: This made up 34.8% of the story’s coverage, or about a third of the total. Prime Minister Anthony Albanese, Treasurer Jim Chalmers, and housing advocacy group Everybody’s Home are arguing that the current system unfairly benefits wealthy investors while locking out first-home buyers. Economist Saul Eslake backs this view.

Perspective 2: This had an equal share, at 34.8% of the story’s coverage. Opponents of the changes, including Angus Taylor, the Housing Industry Association, and Victorian Libertarian Party Leader David Limbrick, are warning that scrapping these tax incentives will scare off investors, shrink rental supply, and push rents up by as much as 30% (Herald Sun).

![image](https://www.isentia.com/wp-content/uploads/2026/04/image-11.png)

What’s interesting is what sits beneath these two dominant perspectives. A third angle, which made up 17.4% of the story’s coverage and was driven by Chalmers and Greens Senator Nick McKim, frames the whole debate as a question of intergenerational fairness. And then there are the young “rentvestors” who rent where they live but own an investment property elsewhere. They’re worried about getting caught in the crossfire of changes that weren’t designed with them in mind (Australian Financial Review).

The Grattan Institute released a report warning that trust in Australian democracy is under pressure, and housing is one of the reasons why. This soon became the second biggest story, generating 58 media items.

Led by Grattan CEO Aruna Sathanapally, the report argues that persistent inequality, including the housing affordability gap, is eroding the social contract between citizens and government. The report explicitly names the housing crisis as one of the major unresolved challenges fuelling public disillusionment. Sathanapally is the key driver of this story, commanding over 93% of its coverage. Her influence matters because she’s reframing housing as something bigger than an economic problem. She’s positioning it as a threat to democratic stability. That’s a powerful narrative shift, and one that gives housing advocates a new way to make their case.

For anyone in public affairs or government communications, this connection between housing and democratic trust is worth watching. It’s the kind of framing that can reshape how policymakers prioritise the issue.

![image](https://www.isentia.com/wp-content/uploads/2026/04/image-9.png)

Housing is no longer a new crisis. It’s a nationally entrenched issue that the public is now debating alongside policymakers and experts at the highest levels of government. These debates are about solutions, trade-offs and fairness. The conversation has become much more sophisticated: audiences are not just talking about “prices being too high”, but discussing supply, investment, and short-term relief versus long-term reform. It’s also essential to look at the key voices driving the top narratives. From economists to policymakers to advocacy groups, the voices gaining traction are influencing how the issue is understood and what solutions feel viable.

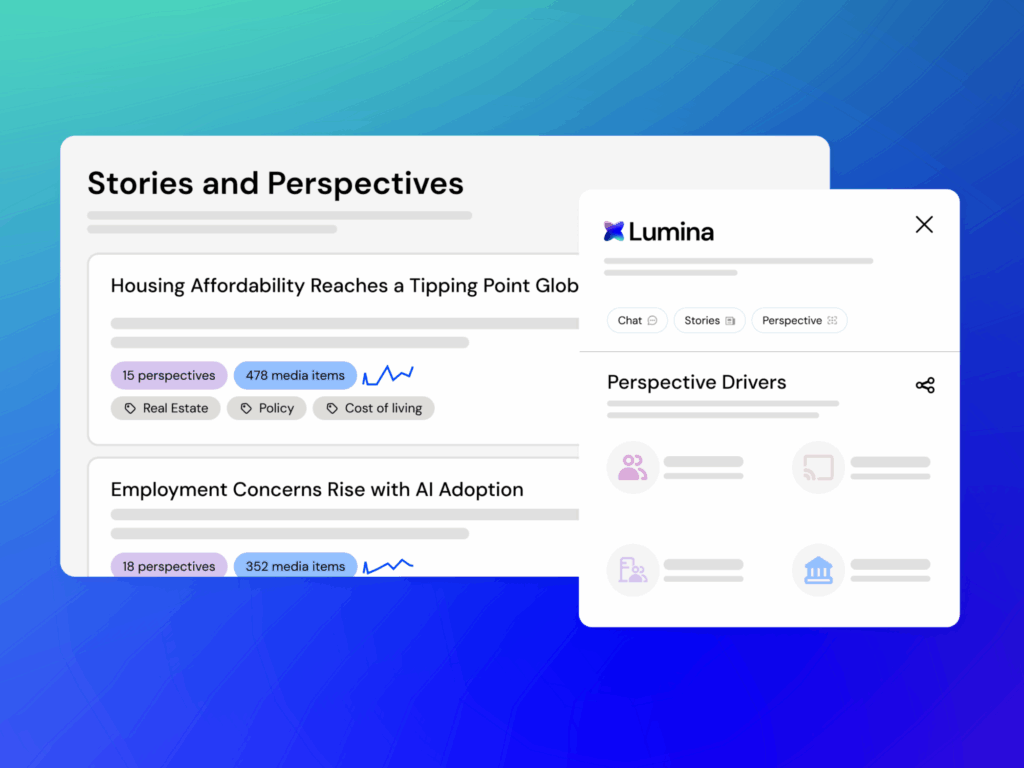

Understanding not just what’s being said, but who is driving the conversation and why it’s resonating, is becoming critical for organisations looking to engage credibly. That’s where Lumina’s Stories and Perspectives comes in, helping you move beyond headlines to uncover the narratives and voices shaping the issues that matter most.

Want to see these insights for your own industry or brand? Discover what Lumina Stories and Perspectives can surface for you.


The media landscape is accelerating. In an era where influence is ephemeral and every angle demands instant comprehension, PR and communications professionals require more than generic technology. They need intelligence engineered for their specific challenges.

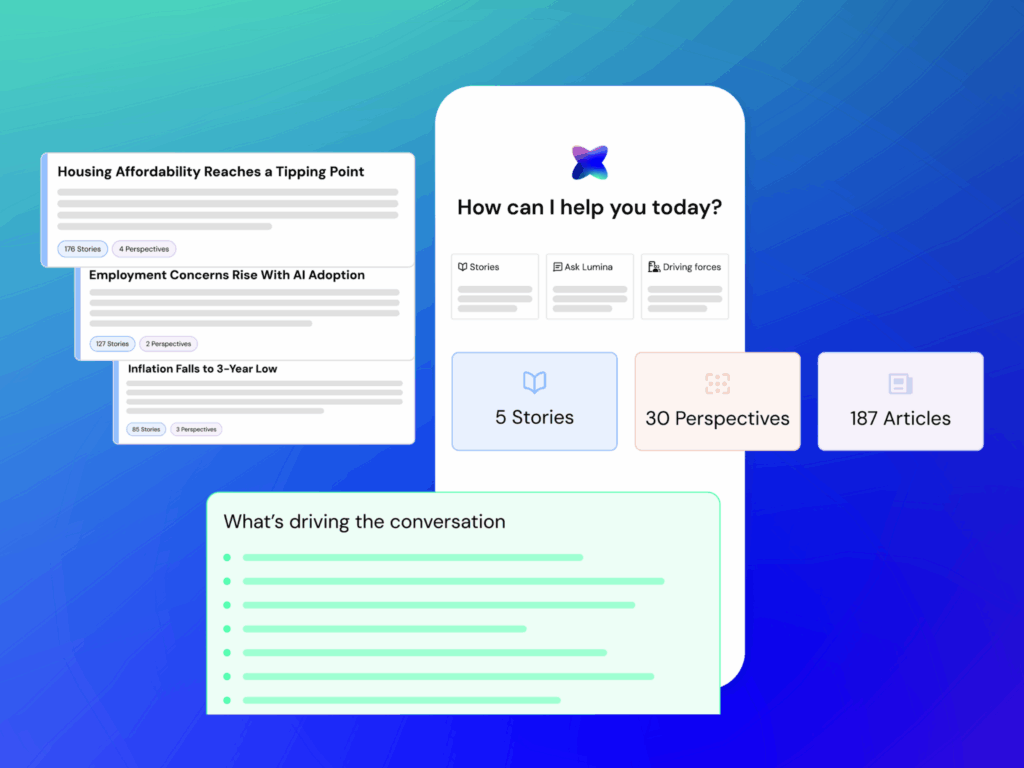

Isentia is proud to introduce Lumina, a groundbreaking suite of intelligent AI tools. Lumina has been trained from the ground up on the complex workflows and realities of modern communications and public affairs. It is explicitly designed to shift professionals from passive media monitoring back into the role of strategic leaders and pacesetters.

“The PR, Comms and Public Affairs sectors have been experimenting with AI, but most tools have not been built with their real challenges in mind,” said Joanna Arnold, CEO of Pulsar Group.

“Lumina is different; it is the first intelligence suite designed around how narratives actually form today, combining human credibility signals with machine-level analysis. It helps teams understand how stories evolve, filter out noise and respond with context and confidence to crises and opportunities.”

Lumina is centered on empowering, not replacing, the human element of communications strategy. This suite is purpose-built to help PR, Comms, and Public Affairs professionals significantly improve productivity, enhance message clarity, and facilitate early risk detection.

Lumina enables communicators to:

We are launching the Lumina suite by making our first module immediately available: Stories & Perspectives.

In the current fragmented, multi-channel media environment, communications professionals need to see at a glance not just how a story is growing, but also how it is being perceived across different stakeholder groups.

Stories & Perspectives organizes raw media mentions into clustered, cohesive Stories, and the Perspectives that exist within each, reflecting distinct media, audience, and public affairs angles. This unique functionality allows users to:

"Media isn’t a stream of mentions," said Kyle Lindsay, Head of Product at Pulsar Group. "But rather a living system of stories shaped by competing perspectives. When you can see those structures clearly, you gain the ability to understand issues as they form, anticipate how they’ll evolve, and act with precision. That’s what we mean when we talk about AI built for communicators, and that's what an off-the-shelf LLM can't give you."

The launch of Stories & Perspectives is the first release of many. Over the upcoming months, we will systematically roll out the full Lumina roadmap, introducing a comprehensive set of AI tools engineered to handle every phase of the communications lifecycle.

The full Lumina suite will soon incorporate:

Want to harness the power of Lumina AI for your PR, Comms, or Public Affairs team?

Complete the form below to register your interest.

Get in touch or request a demo.